AUGUST 18, 2018

In a casual conversation about the recent developments in the Artificial Intelligence (AI) world, Animesh Karnewar’s mother posed a question: “Can AI do something for me?” An avid reader, she wanted to know whether her son — an AI engineer at Mobiliya — could develop an algorithm that could generate images of people based on their descriptions in novels.

Karnewar was intrigued and promised her he would try. “It was a very cool problem with an interesting application,” says the 23-year-old. The relatability of the challenge appealed to him: he had the same trouble imagining what Rachel, the protagonist of Paula Hawkins’ The Girl on the Train, looked like.

Machines that argue

The result of the Pune-based engineer’s three-month experiment is titled ‘Text to Face’ (T2F). It relies on a concept called Generative Adversarial Networks (GANs), introduced in 2014 by machine learning scholar Ian Goodfellow. GANs consist of two networks, one called the generator and the other the discriminator, which are pitted against each other. The discriminator tries to spot every fake produced by the generator, while the generator tries to make what it produces as close to the authentic version as possible. Through repeated rounds of this contest, the rendered image is fine-tuned.
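For readers who want to see the contest in numbers, here is a minimal sketch of how the two networks are commonly scored. It is not taken from Karnewar’s project: the function names `discriminator_loss` and `generator_loss` are hypothetical, the scores are made-up probabilities between 0 and 1, and the generator score uses the widely taught "non-saturating" variant rather than anything the article confirms T2F uses.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Low when real samples are scored near 1 and fakes near 0.

    d_real, d_fake: lists of discriminator outputs in (0, 1), read as
    'probability that this sample is real'. Illustrative values only.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Low when the discriminator scores the generator's fakes near 1.

    This is the 'non-saturating' form, assumed here for illustration.
    """
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# A sharp discriminator: real images scored 0.9, fakes scored 0.1.
sharp = discriminator_loss([0.9], [0.1])
# A confused discriminator: everything scored 0.5.
confused = discriminator_loss([0.5], [0.5])

# The generator does better when its fakes are scored as real.
fooled = generator_loss([0.9])
caught = generator_loss([0.1])
```

In this toy setup `sharp` is smaller than `confused`, and `fooled` is smaller than `caught`: each network lowers its own score only at the other’s expense, which is the tug-of-war that drives training.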

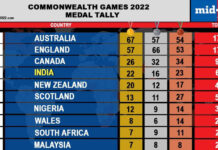

Sample images generated by Karnewar, all based on textual description from a data set

But GANs need data to get to work. Karnewar came across researchers at the University of Copenhagen and University of Malta, who were working on a project trying to achieve the reverse: Face to Text. On request, he received 400 randomly selected faces accompanied by text annotations.

“I thought this number might not be enough to train a network, but it’s worth a shot,” he recalls, adding, “But I was surprised and happy to see how much we achieved with just a handful. For example, if the textual description said blonde hair, the network always generated a golden colour, and it learned about complexions very soon.”

Sharp blur

A time-lapse video on YouTube reveals how, over a series of iterations, the GAN generates images based on the textual description. The result is a set of heavily pixelated images which look more like a badly focussed photograph than a creation of AI technology. While Karnewar knows that there is a lot more work to be done before he can see Rachel’s face clearly, he is excited about the potential applications of the tool. “It can help casting directors find actors for roles, based on descriptions, and it can help law enforcement officials find victims or perpetrators,” he says. “On a larger scale, it can be used to render scenes and objects in 3D as well.”

To help take his project to the next step, Karnewar has made the project open source and shared the code on GitHub, the collaborative site for developers.

Courtesy: The Hindu